Chair's Corner: Kristen Rottinghaus (Kansas)

How quickly time flies. I can’t believe it’s already been six months since I started my term as chair. As any of you who know me can attest, I’m basically always cold, so I’m especially excited to usher in the warm weather and sunny days of summer. For many of us, legislative session is over and summer marks the start of catching up on things on the back burner. It’s also the season of office projects, professional development training, and family vacations.

Regardless of where you’re at and what your to-do list looks like, I hope this letter finds you doing well and looking forward to an enjoyable summer. Thanks to the hard work and unwavering support of my friends on the Executive Committee, we’ve accomplished a lot and still have more exciting things in store.

- Staff Hub ATL 2022, the fall professional development seminar, will be in Atlanta from October 10-12. NLPES is joining five other staff sections at the conference — leadership staff, research librarians, information and communications staff, fiscal staff, and research, editorial, and legal staff. Please stay tuned for additional information from Brenda Erickson about the agenda and registration very soon. The mix of legislative experiences will provide tremendous opportunities for networking and sharing ideas. On behalf of the entire Executive Committee, we hope to see you there! And another quick plug: The NCSL Legislative Summit is August 1-3 in Denver.

- We also have plenty of professional development opportunities in the works. We’ve made a concerted effort to provide more frequent virtual trainings this year, offering webinars or information sharing calls nearly every month. We hope you’ve all found value in the virtual programming so far and welcome your feedback. We’ve still got several more training opportunities planned for the remainder of this year so please check the 2022 training calendar periodically for updates and registration information.

- The new and improved Audit Report Database is now live. After several years of work and piloting, the Executive Committee is excited to offer a more user-friendly version of the report database. We hope it quickly becomes a go-to resource for your offices to find the work of your colleagues on specific topics.

- Congratulations to the 2022 NLPES award winners and to everyone who volunteered their time as a judge. It’s exciting to see how much great work our legislative evaluation offices do, and it is personally humbling to be part of such a talented group of professionals.

- Congratulations also are in order for the four individuals who will be joining the 2022-2023 Executive Committee. We’re happy to welcome Darren McDivitt (Texas), Marcus Morgan (Alabama), Micaela Fischer (New Mexico), and Ryan Langrill (Idaho), who will begin their terms this fall. At the same time, we are sad to say goodbye to four members whose terms will be ending: Emily Johnson (Texas), Kiernan McGorty (North Carolina), Mary Jo Koschay (Michigan), and Paul Navarro (California). Thank you to each of you for your service to this committee. We wouldn’t have been able to accomplish everything we have without your efforts and creativity.

As my time chairing the committee comes to a close, I want to thank the members of the Executive Committee and Brenda Erickson for their continued commitment to NLPES.

I’d also like to thank each of you as NLPES members for your involvement. We’ve got a pretty unique job and it’s nice to have the advice, wisdom, and support of colleagues to count on. It’s been my pleasure and privilege to serve the committee and I look forward to seeing all of the wonderful things this group will continue to accomplish!

Kristen Rottinghaus is the 2021–2022 NLPES Executive Committee Chair.

Research & Report Roundup

Report Spotlight: Tennessee Promise

Lauren Spires, Tennessee

In 2014, the Tennessee General Assembly adopted legislation creating the Tennessee Promise Scholarship, giving recent high school graduates an opportunity to earn an associate degree or technical diploma free of tuition and mandatory fees. The main eligibility requirement is residency in Tennessee; this differs from other scholarships, which may include financial need and academic merit criteria.

Community-based organizations provide a paid or volunteer mentor to assist each applicant with the college application and financial aid process. Promise students must begin an eligible degree program in the fall semester immediately after high school graduation. Students may receive the scholarship for up to five semesters or eight trimesters, or until they earn a diploma or associate degree, whichever occurs first.

Tennessee Promise is part of a larger statewide Drive to 55 initiative, which aims to equip 55 percent of Tennesseans with a postsecondary credential by 2025 to meet anticipated workforce demand. Tennessee Promise supports two of the initiative’s focus areas, college access and completion, for recent high school graduates.

State law requires the Comptroller’s Office of Research and Education Accountability (OREA) to review, study, and determine the effectiveness of the Tennessee Promise Scholarship on a recurring basis. OREA published its first evaluation in July 2020.

Measuring program effectiveness: College access and completion

The research process took place over two years and included more than 25 interviews with state and local stakeholders, surveys of college administrators, and analysis of data from multiple sources (such as the Tennessee Department of Education, the Tennessee Higher Education Commission, the American Community Survey, and the Lumina Foundation).

The effectiveness of Tennessee Promise was measured by evaluating the program’s two objectives, college access and completion. OREA measured access by college-going rates (i.e., the share of high school graduates who enroll in college immediately following graduation) and completion by credit hour accumulation, year-to-year retention, and degree attainment. OREA also analyzed these measures by student subgroup. Students from certain racial, gender, geographic, and socioeconomic subgroups have historically been less likely to enroll, persist, and earn a postsecondary credential, so the degree to which access and completion rates could increase for these subgroups is considerable.

OREA also evaluated the processes applicants must follow to become and remain Promise students, using measurements such as program application and Free Application for Federal Student Aid filing rates, mandatory meeting attendance, community service completion, and postsecondary enrollment. Once enrolled in higher education, students must meet additional requirements to maintain Promise eligibility, such as completing community service hours each semester as well as maintaining a 2.0 cumulative GPA and full-time continuous enrollment.

OREA analyzed available data to measure success and identify the program rules most often missed by Promise applicants. Researchers used Stata to conduct statistical analyses, including simple linear regression, binary logistic regression, and logistic regression, to measure Promise student performance against other recent high school graduates who were enrolled in college but not participating in the program.

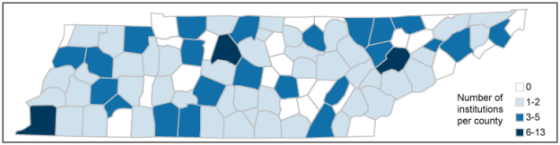

The report also highlighted the issue of transportation and proximity. To analyze the distance Promise students must travel to reach the nearest eligible institution, OREA compared the addresses of all 371 Tennessee public high schools with all Promise-eligible institutions (including satellite campuses). Public high schools are a reasonable proxy to use in calculating how far students must travel to attend the nearest eligible institution. OREA conducted three different analyses, each accounting for the variance among eligible institutions (i.e., program and course offerings, cost to student, and enrollment capacity), to determine the distance to reach a Promise-eligible institution.

OREA also calculated the average amount Promise students must pay after the last-dollar scholarship is applied to their tuition and mandatory fees, analyzed the biggest drop-off points in the application process, and projected the necessary growth in the state’s postsecondary attainment rate.

Results

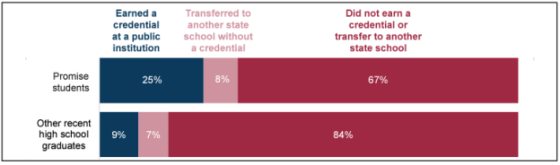

Controlling for ACT scores, race, gender, expected family contribution, and first-generation college student status, Promise students were about two times more likely to return for sophomore year and earn a credential than other recent high school graduates enrolled at Promise-eligible schools.

Exhibit 1: Promise students were more likely to earn a credential in five semesters compared to other recent high school graduates

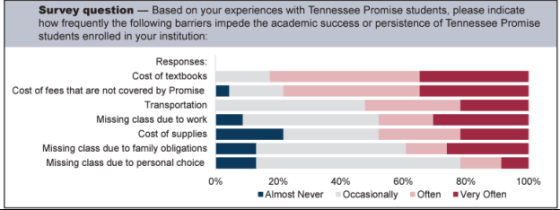

While Tennessee Promise ensures students can attend college free of tuition and mandatory fees, it does not cover other expenses such as books, supplies, tools, and non-mandatory fees (e.g., online course fees, science lab fees). Community college administrators estimated that Promise students pay an average of $1,150 each year for these items. Moreover, 75 percent of survey respondents said the costs of textbooks, fees, tools, and supplies not covered by Promise impede the academic success and persistence of Promise students enrolled at their institution “often” or “very often.” Almost all respondents indicated that at least half of students did not know that the Promise program, though promoted as “free college,” does not pay for these items.

Exhibit 2: The costs of textbooks and fees not covered by the Promise scholarship were identified as the most common barriers to academic success and persistence by college administrators

OREA found that students who live in certain areas of the state must travel farther to reach a Promise-eligible institution. This could impact both college access and completion because research has shown that students who live over 30 minutes from their college are less likely to stay enrolled or obtain a credential. Specifically, there are 76 public Tennessee high schools located more than 30 minutes from the nearest community college campus; on average, 42.5 minutes (or 29 miles) separate these high schools from the nearest community college campus. Five of these high schools are located about an hour or more away, with the maximum distance being 74 minutes (or 49 miles).

Exhibit 3: There are areas of Tennessee where students must travel longer distances to attend a Promise-eligible institution

The report concluded that the Promise program works, but more students from various subgroups and rural parts of the state must enter and remain in the program for Tennessee to meet its statewide Drive to 55 goal.

Lauren Spires is a principal legislative research analyst and the higher education resource officer in the Tennessee Comptroller’s Office of Research and Education Accountability.

(Editor’s note: The Tennessee Promise report won a 2021 NLPES Excellence in Research Methods award.)

NCSL's Center for Results-Driven Governing

Brenda Erickson and Kristine Goodwin, NCSL

Evidence-based policymaking is the process of using high-quality information to improve decision-making about government policies. Momentum for “evidence-based policymaking” began in the 1970s and resurged in the 1990s.

Evidence-based policymaking requires evidence to be generated and available for policymakers to use. Unfortunately, one major challenge in using more evidence in policy deliberations historically has been the lack of relevant, timely information.

The generation of evidence to inform government decisions is a collaborative process that benefits from participation by officials from all branches of government and a range of experts, including researchers, evaluators, statisticians, information technology officers, data scientists, and program managers.

NCSL launched the Center for Results-Driven Governing in September 2020. The Center helps decisionmakers use data and evidence to make budget and policy decisions, and it offers a framework and a set of tools that can be used to allocate resources most effectively.

With support from The Pew Charitable Trusts and an advisory group of state legislators, legislative staff and executive branch officials, the Center released a report outlining evidence-informed policymaking principles and strategies. From defining key terms—so decisionmakers know what’s meant by evidence-based or promising programs—to embedding evidence into the way states budget and prioritize spending, the report “ABCs of Evidence-Informed Policymaking” highlights strategies states have used to get the best results and make the most of taxpayer-funded programs.

The Center researches state actions, provides a forum for sharing best practices and delivers training and technical assistance to states to build awareness and enhance capacity to assess the quality of programs.

In addition, the Center, in partnership with the Council of State Governments and the Policy Lab at Brown University, launched the Governing for Results Network in August 2021. The peer learning network engages state legislators, budget directors and legislative and agency staff from 11 states.

Members exchange insights, best practices and lessons learned with peers within and across network states, and they participate in and have access to:

- Virtual and in-person convenings and webinars.

- A resource library and online communication platform.

- Customized state supports and assistance.

- Publications and resources to amplify the network’s accomplishments.

Using evidence to inform policy decisions can help policymakers invest wisely and achieve meaningful results. NCSL’s Center for Results-Driven Governing is willing and able to assist with the process.

Brenda Erickson is the NCSL liaison to NLPES, and Kristine Goodwin is the director of NCSL’s Center for Results-Driven Governing.

NLPES Recognizes Exemplary Work

Jason Juffras, District of Columbia

NLPES recently announced its annual awards, culminating a review process that is both inspiring and challenging due to the sheer volume of excellent work. Executive Committee member Drew Dickinson, who serves on the Awards Subcommittee, noted that, “There were many impressive submissions this year for each of the award categories, and I know that the judges found it challenging to pick the winners.”

Outstanding Achievement

James Barber, who retired last year as executive director of Mississippi’s Joint Legislative Committee on Performance Evaluation and Expenditure Review (PEER), received the Outstanding Achievement award for contributions to legislative performance evaluation. During his 43 years with Mississippi PEER, Mr. Barber performed two stints as chair of the NLPES executive committee and even found time to edit this newsletter for 10 years – among many other accomplishments.

Excellence in Evaluation

Michigan’s Office of the Auditor General won the Excellence in Evaluation award for significant contributions to the field of performance auditing.

Excellence in Research Methods

Florida’s Office of Program Policy Analysis and Government Accountability and Kansas’ Legislative Division of Post Audit were honored for Excellence in Research Methods. The award reflects exceptional breadth, depth, and scope of field work, innovative methodology, and technical difficulty or sophistication.

Certificates of Impact

A total of 27 states won Certificates of Impact for reports resulting in documented policy changes, program improvements, savings, or other critical impacts.

Congratulations to the award winners and many thanks to NLPES members who served as award judges!

Jason Juffras is a senior analyst in the Office of the District of Columbia Auditor and serves on the NLPES Executive Committee.

Professional Updates

Alabama Office Has Aces in the Hole

Marcus Morgan, Alabama

Budget decisions can be difficult for a part-time legislature and the challenge can be amplified by limited resources. After the Great Recession and three consecutive years of proration, the Alabama Legislature looked for an approach to make decision-making more data-driven and results-oriented.

In 2017, at the direction of the Legislature and with the support of the Governor, Alabama launched the Alabama Support Team for Evidence Based Practices (ASTEP). Located within Alabama’s Legislative Services Agency, two ASTEP analysts were tasked with finding a cost-effective way to systematically evaluate how state-funded programs were performing. After two successful pilot projects, executive and legislative branch leaders concluded that consistent and sustained success would require commitments from both branches.

The Alabama Commission on the Evaluation of Services (ACES) was formed in 2019 with that mindset and the duty to advise the Governor and Legislature on the evaluation of services, including evidence-based practices. The 14-member cross-branch collaboration is a unique approach to program evaluation and is the state’s first dedicated evaluation agency.

Commission priorities for the upcoming fiscal year are set annually based on recommendations from members and staff. The work plan, a living document that combines flexibility with clear direction, consists of the following three phases:

- Current priorities and evaluations.

- A development phase where staff begins researching and scoping upcoming evaluations.

- A discovery phase where potential evaluation topics are examined for future work.

ACES’ non-partisan staff of four full-time employees supports the Commission and executes the annual work plan. In the first three years, ACES published seven reports evaluating multiple policy areas including public and rural health, corrections, and education. The Commission used the findings and recommendations to update enabling legislation to strengthen program accountability, align program design with desired outcomes, and expand program reach.

Notably, legislation is not always the necessary course of action. After ACES’ first evaluation on suicide prevention, leaders from both branches met to decide how to implement the report’s recommendations, resulting in multiple agencies acting and improving collaboration to prevent suicide.

As ACES continues to evolve and refine its evaluation process, it remains laser-focused on providing valuable, meaningful, and actionable information to policymakers.

Marcus Morgan is the Director of the Alabama Commission on the Evaluation of Services.

5 Pieces of Advice for a New Analyst

Brooke Kacala, District of Columbia

A year ago, I started my analyst career in the D.C. Auditor’s office. Since then, I have learned five realistic and useful lessons that can guide you through your first year as an analyst. You might ask, “Why should I listen to you?” Well, I know exactly how you’re feeling, and don’t worry: I asked my supervisor for some advice. Whether or not you learn the importance of being curious, working more efficiently, or becoming a great teammate, remember that you only learn from experience.

1. Don’t sweat the small stuff. You won’t know everything.

As a new analyst, you are what I like to call a ‘rookie.’ Rookies don’t know everything and are bound to make mistakes. But the knowledge we learn from our mistakes is what matters. When you first start as an analyst, you are meant to try new things and venture out of your comfort zone.

By doing so, you will learn about your strengths, weaknesses, and how to grow, because discomfort pushes you to grow, allowing new experiences, opinions, and perspectives to come in. To be successful requires becoming comfortable with being uncomfortable. I know it’s easier said than done. Who doesn’t love their comfort zone? Binge watching a season of Ozark is way more relaxing than making mistakes. But it doesn’t allow you to take the risks needed to become a successful analyst.

2. It’s finally okay to be nosy!

Analysts are infamous for being detail-oriented and always asking questions. We always want to know the ins and outs of every policy, program, and so on. When I was a child, my parents always asked me, “Am I being investigated?” as I asked them questions until I fell asleep. Over time, my parents hoped I would become less curious and nosy, but then I became an analyst. Always remember the importance of questions, as they can spark change. As analysts, we are always stating the facts, but remember that questions can shift one’s thinking, inspire innovation, and stimulate change within an organization.

3. Work efficiently, not quickly.

Forget all the harsh deadlines ingrained in your memory from Intro to Psychology. Analyzing data takes time and accuracy. Learning how to un-learn, and not to pack a week’s worth of tasks into two hours, is difficult, but here are some tips I learned in my first year as an analyst. First, keep ongoing lists of tasks you need to complete. Second, create ‘fake’ deadlines, making it a pattern to complete work before it is due. Lastly, make time for yourself, and finally binge watch the entire season of Ozark. Having a healthy work-life balance will increase your productivity and engagement since you won’t crave time in your comfort zone… as much.

4. There is no “I” in team.

Teamwork does not go away once you graduate college or finish your internship. However, it does get better (yes, I know, that is hard to believe!). Having team members is extremely beneficial for a new analyst, especially because you can ask them questions you’re afraid to ask your supervisor, such as “What does COB mean?” Working on a team also helps you grow professionally because gradually you begin to feel safe communicating with your team. In turn, your work becomes collaborative, reducing stress and pressure.

5. Analyzing data is not intuitive. That’s why we have jobs.

Analyzing data is difficult, and it is our job to make data understandable and reader-friendly. When I started as an analyst, I was overwhelmed by the amount of research I had to read through. My colleagues, all with 10+ years of experience, made the job look so easy. I’d question whether I was doing my work correctly, and believed I had no right being there. After some time, I realized that I was hired for a reason, and deserved to be there. Analyzing is our job, and it is not easy, but if we weren’t good at it, we wouldn’t be analysts.

Brooke Kacala is an analyst in the Office of the District of Columbia Auditor.

Should You Pursue a CFE?

Jason Juffras, District of Columbia

Auditors and evaluators play a critical role in deterring and detecting fraud. NLPES members who shared their insights with The Working Paper report that becoming a Certified Fraud Examiner (CFE) helped them hone their fraud-fighting skills.

The Association of Certified Fraud Examiners awards the CFE to those who pass a 400-question exam covering fraud prevention and deterrence, financial transactions and fraud schemes, investigation, and law. A score of at least 75% is required in each category, and there are additional requirements for education and professional experience.

Mike Jones, audit manager for the West Virginia Legislative Auditor, describes the CFE exam as “difficult but not impossible … Those who put the time and effort in to study and learn the material will succeed.”

The test fee is $450, but there are additional costs for exam preparation. Applicants can prepare through live or virtual instruction in a class setting or by self-study.

Vicki Hanson was a teacher before joining the Arizona Auditor General’s office, where she now directs the Division of School Audits. She reports that pursuing a CFE enhanced her knowledge of accounting as well as her professional credibility. Being part of the CFE network has also helped Hanson stay current on fraud schemes.

Marcus Morgan, director of Alabama’s Commission on the Evaluation of Services, describes obtaining a CFE as “time-consuming” but “worth it.” He bluntly notes that, “Credentials matter.” Morgan adds that the CFE offers access to a “huge network of talented people” and training resources.

Mark Lee, investigative analytics audit manager for Michigan’s Auditor General, credits his CFE training for building his knowledge of what constitutes fraud and how internal controls enable or hinder fraud. He spent five to 10 hours per week over six months to prepare for the CFE test using a self-study course.

Soon after earning a CFE, West Virginia’s Jones used the training to help expose embezzlement by a volunteer fire chief. He has also drawn on the CFE to assess agency fraud response plans and has applied fraud detection techniques such as Benford analysis in his audits.
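
For readers curious about the Benford analysis mentioned above, here is a minimal Python sketch of a first-digit test. It is an illustration only and is not drawn from any office's audit work. The function names (`leading_digit`, `benford_expected`, `first_digit_report`) are hypothetical, and the demo data are powers of 2, a sequence known to follow Benford's law closely, standing in for real transaction amounts. The sketch compares the observed share of each leading digit (1 through 9) with the share Benford's law predicts, log10(1 + 1/d), and computes a chi-square statistic as a rough measure of the gap.

```python
import math
from collections import Counter


def leading_digit(amount):
    """Return the first significant digit (1-9) of a number, or None for zero."""
    if amount == 0:
        return None
    # Scientific notation puts the first significant digit first,
    # e.g. 4521.7 is formatted as '4.521700000000000e+03'.
    return int(format(abs(amount), ".15e")[0])


def benford_expected():
    """Expected share of each leading digit under Benford's law."""
    return {d: math.log10(1 + 1 / d) for d in range(1, 10)}


def first_digit_report(amounts):
    """Compare observed leading-digit shares with Benford's law.

    Returns (rows, chi_square), where rows maps each digit to a tuple of
    (observed share, expected share).
    """
    digits = [d for d in map(leading_digit, amounts) if d is not None]
    if not digits:
        raise ValueError("no nonzero amounts to analyze")
    counts = Counter(digits)
    total = len(digits)
    expected = benford_expected()
    rows = {d: (counts[d] / total, expected[d]) for d in range(1, 10)}
    chi_square = sum(
        (counts[d] - total * expected[d]) ** 2 / (total * expected[d])
        for d in range(1, 10)
    )
    return rows, chi_square


if __name__ == "__main__":
    # Stand-in data (an assumption for illustration): powers of 2.
    demo = [2 ** n for n in range(1, 200)]
    rows, chi_square = first_digit_report(demo)
    for digit, (observed, expected) in rows.items():
        print(f"{digit}: observed {observed:.3f}, expected {expected:.3f}")
    print(f"chi-square statistic: {chi_square:.2f}")
```

A large gap between observed and expected shares is a prompt for follow-up, not proof of fraud. Many legitimate data sets, such as amounts with a fixed minimum or maximum, do not follow Benford's law.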

News Snippets

Did You Know?

The U.S. General Accounting Office (GAO) first issued its Government Auditing Standards, better known as the “Yellow Book,” in 1972.

GAO managers had proposed naming the book, “The Golden Rules of Auditing,” matched by a gold cover. Elmer Staats, who was Comptroller General at the time, objected to the presumptuous nature of the design, and the governmental auditing standards were downgraded from gold to yellow.

In 2004, the Congress changed GAO’s name to “Government Accountability Office” to reflect its broader role in performance auditing and program evaluation, while retaining its well-known acronym.

Let's Get (L)inked

The NLPES LinkedIn Group was created to promote the exchange of ideas among NLPES member offices and staff, and to inform members of upcoming events.

As of this writing, we had 87 members from Maine to Arizona, but we’d like to expand our reach. Mississippi’s PEER office is, well, without peer: it has the most members of our LinkedIn Group (10).

The group is open only to NLPES members. There are two ways to verify your membership and join the group.

Option 1

Hit the “Request to Join” button on the NLPES LinkedIn Group page. If a group manager can verify your eligibility through your LinkedIn profile, the manager may accept your request. If sufficient information is unavailable, the manager may direct you to Option 2.

Option 2

Send an email requesting to join the NLPES LinkedIn Group to Brenda Erickson, our NCSL liaison. In the message’s subject area, type “SUBSCRIBE to NLPES LinkedIn Group.” Please include the following information:

- Name

- Job title

- Audit agency/organization name

- Phone number

- Work email address

- LinkedIn address (you must be registered with LinkedIn to join)

Websites, Professional Development and Other Resources

Audit Report Database—NLPES has launched its updated, more user-friendly audit report database, which will provide easy access to reports from colleagues around the country. This database includes five years of reports and is organized into 28 categories such as agriculture, education, public health, and transportation.

The audit report database will be updated quarterly. To include your latest reports, please e-mail Brenda Erickson with the report title and ID number (if any), date of release, office name, relevant categories, and link. Please use “Report Listing” in the e-mail subject line.

Linda Triplett, a former chair of the NLPES executive committee who worked at Mississippi PEER for 40 years, was instrumental in developing this initiative.

NLPES Listserv—The NLPES listserv is an email discussion group for NLPES members. Users can query other states about evaluation work, receive announcements of evaluation reports and job opportunities from other states, and get notified when the latest edition of this newsletter is available!

To join the listserv, email Brenda Erickson, NCSL liaison to NLPES, with the subject “SUBSCRIBE to NLPES Listserv.” Include your name, job title, agency name, mailing address, phone number and email address. You will receive a “Welcome” message once you are added to the listserv. This link provides guidance on how to post a message to the listserv.

Please note that a new NLPES listserv procedure means that any response to an NLPES listserv posting will be sent to all subscribers. Therefore:

- When you post a message to the NLPES listserv, please include an email address in your signature block to which a separate, private response to your inquiry may be sent.

- If you wish to respond to a listserv posting but do not want your response sent to all listserv subscribers, do not “Reply” or “Reply All” to the initial posting. Instead, please send a separate, private email addressed to the person who posted the original inquiry.

IT Evaluators Form Subgroup—NLPES has formed a new subgroup for information technology (IT) auditors and evaluators to engage in information-sharing calls. The group's first call was held Tuesday, July 26.

During its introductory call, the group discussed what information system/information technology audits are, who decides if IS/IT audits should be done and why they are important. Topics for the group's next call weren't determined, but the group may delve further into assessing the reliability of computer-processed data and evaluating agency access controls.

If you would like to join the NLPES IT/IS subgroup, please email Brenda Erickson.

Staff Happenings

Joel Alter retired after nearly 40 years in Minnesota's Office of the Legislative Auditor. He served most recently as director of Special Reviews, handling concerns or allegations with a narrower scope than a typical audit. Equally important, Joel generously shared his knowledge and insights with NLPES throughout his career. He served on the NLPES Executive Committee from 1999 to 2004 and as NLPES Chair in 2001-02.

Michael Tilden became California’s Acting State Auditor in January 2022. He has worked at the State Auditor’s Office for more than 28 years.

Lucia Nixon left her position as director of Maine’s Office of Program Evaluation and Government Accountability to join the legislature’s Office of Fiscal and Program Review. Peter Schleck has been hired as OPEGA's new director.

Tom Hewlett is the new deputy director of Research and Communications for Kentucky’s Legislative Research Commission, replacing long-time LRC staffer Teresa Arnold, who retired. Tom will oversee the Legislative Oversight and Investigations Committee staff.

Two longtime employees of the Georgia Department of Audits and Accounts announced their upcoming retirements: Leslie McGuire, director of the Performance Audit Division, and David Arner, one of the division's deputy directors. Both have been with the office for almost three decades.

Put These on Your NLPES Radar

NCSL Legislative Summit

The largest bipartisan gathering of state legislators and staff, NCSL’s Legislative Summit took place August 1-3 in mile-high Denver. Find recordings of some sessions—such as “How to Find and Keep Talent in the Great Reshuffle,” “Five Big Ideas to Improve Crisis Response,” and “Supreme Court Center Stage”—on the NCSL website.

Staff Hub ATL 2022

In place of our annual professional development seminar, NLPES will participate in Staff Hub ATL, from October 10-12 in Atlanta. NLPES will be among six NCSL staff associations involved in this joint effort to share knowledge and build connections among legislative offices. Topics to be discussed include strategic communications, time management and productivity, and the economic outlook and state budgets.

Upcoming Member Survey

This fall, the NLPES executive committee will survey the membership to get feedback and ideas on professional engagement, communication methods, this newsletter, and other topics. Please respond so we can practice what we preach – continuous improvement!